Sharing Responsibility Productively

With Both Humans & Artificial Intelligence

I grumbled last week about an idea I originated failing to meet the 25-year rule – the tendency for a new idea to require a quarter century to take hold. But this week, I had an example of how it can work and it was in relation to something I wrote 24 years ago on taking responsibility. That is the subject of this week’s original Playing to Win/Practitioner Insights (PTW/PI) piece, which is called Sharing Responsibility Productively: With Both Humans & Artificial Intelligence. As always, you can find all the previous PTW/PI here.

Background

In 2002, I published my first book, The Responsibility Virus: How Control Freaks, Shrinking Violets – and the Rest of Us – Can Harness the Power of True Partnership. Pretty much everybody who read the book seemed to like it. I get endless great feedback on it. I think it is the favorite book of my longtime literary agent, Tina Bennett, who keeps wanting me to do a new edition. However, it was my first book – so I wasn’t known as an author yet – and it was poorly written – I hadn’t figured out yet how to write an easy-to-read, engaging book. It made readers work too hard. Unsurprisingly, it didn’t sell at all well. After 24 years, I think it is still under ten thousand copies – ugh.

The book was published relatively shortly after I started my term as dean of the Rotman School of Management at the University of Toronto in 1998. I inherited a star JD/MBA, Daniel Debow, who had started in 1996 and graduated in 2000. He has gone on to a spectacular career of serial entrepreneurship and angel investing. We became friends and co-investors, and I chaired a couple of his companies.

Daniel was one of that small cadre of enthusiastic readers of The Responsibility Virus and fifteen years later, in 2017, he wrote a Medium article that drew from it: How to be an effective early stage employee. Hint: be helpful. In it, he adapted the central framework from the book (with full credit – thanks Daniel) to discuss how a junior employee in a start-up could take varying levels of responsibility, which was illustrated by Daniel’s modification of the graphic at the head of this article.

His Medium piece dramatically increased the distribution of the idea, probably to five to ten times the reach of the book, judging by likes and views.

Then another eight years later, Daniel’s entire chart was picked up and pasted into the popular Big Ideas 2026 Andreessen Horowitz a16z podcast – with attribution to Daniel; good for them. It is closing in on 100K views already. So maybe the idea is getting to breakthrough level, which is cool. And there is still one year to go on the 25-year rule!

The Original Idea

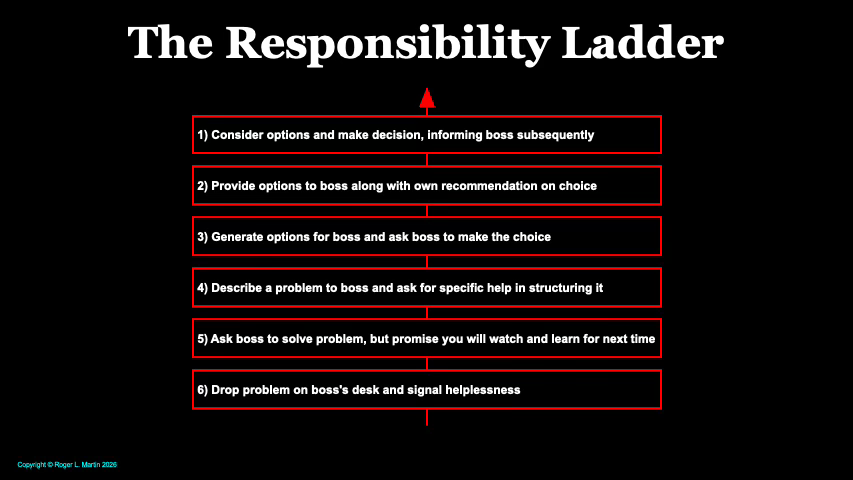

The Responsibility Virus book is about mis-assignment of responsibility. It posited that the two dominant apportionments of responsibility are: I am in charge & you are not, and you are in charge & I am not. It further argued that these zero-sharing alternatives are rarely an effective division of responsibility. Instead, they create extremes of unhelpful over- and under-responsibility. Better is a more nuanced approach with gradients of responsibility, which is illustrated in The Responsibility Ladder shown at the top of this piece. Rather than I’m in charge or you are, there are a variety of divisions of responsibility that create far more productive sharing of responsibility across the parties.

I have written about this before in this series, but that was from the perspective of the leader in the context of Strategic Choice Chartering. However, the Ladder can be used in both directions. It can be used by a leader in doling out responsibilities or, and especially if the leader isn’t good at it, by a junior person taking on responsibility.

The latter is the context for Daniel’s article, which focused on advice on how to be effective as a junior person. Daniel took two of my rungs (my 4 and 5) and merged them into one (his 2), which does no harm to the model. And we number the rungs in opposite directions – a lower number connotes more responsibility in mine, and less in his – again, no damage done.

However, his core prescription is a bit different than mine. His advice is to go higher up the ladder – the top rung is best. Consistent with the core message of the book, I am a little more nuanced. Relative to the task at hand, pick the right ladder rung based on your skills and make that intention transparent to your boss – your boss needs to know what you will be coming back with. Is it options, is it a recommendation, is it a report back on the answer?

The bottom rung is always a bad idea. You won’t be valuable and you won’t learn a thing acting helpless on that rung. At least move up to the next rung if you are flummoxed by the task. That is, ask for help but promise you will learn for next time. If you are somewhat confused, but with a little help, you can get somewhere – go up another rung and ask for help structuring the task and then take it on. If you feel more confident, go up another rung and provide options. If you are still more confident, go up yet another rung and give your recommendation. And if you are highly confident, take the top rung: make the decision and inform your boss after the fact.

The key is to recognize that your optimal level of responsibility is going to differ by task. Some tasks are harder than others. And some have more serious organizational consequences than others.

Though we have some differences in the how, my overall goal is the same as Daniel’s: get up the ladder as high as possible as fast as possible. And when you are consistently operating at the top rung, you should have your boss’s job – which should free your boss up to take the next higher job.

Andreessen Horowitz a16z Podcast

The Andreessen Horowitz podcast puts forward three ideas for 2026, the first of which is “The Death of the Prompt Box” (the first 4 minutes) presented by Marc Andrusko, an investing partner. His idea is the following:

“2026 marks the death of the prompt box for mainstream users. The next wave of AI apps will have zero visible prompting – they’ll observe what you’re doing and intervene proactively with actions for you to review.”

He puts up Daniel’s version of the ladder (citing Daniel, bless Andrusko) and declares there are five types of employees – each defined by operating at one of the rungs of Daniel’s version of the ladder. And of course, the most valuable employees are the top-rung employees. Then he suggests that the future will be AI apps at the top rung – performing the task without asking or even being prompted by the human operator.

My View

I totally get what the AI enthusiasts wish to have happen. They wouldn’t be AI enthusiasts if they weren’t excited about AI’s possibilities. But I think they are not correct, nor is what they suggest, in fact, desirable. Having AI operating on the top rung of The Responsibility Ladder – making decisions and informing the human after the fact – is like all subordinates thinking that for all tasks, they should be on the top rung, and that all bosses want that from their subordinates all the time.

That is a recipe for disaster for subordinates and bosses alike. The reason, which I argue as the core point of the book, is that responsibility sharing is not a one-size-fits-all proposition. It is context-specific, and each context – i.e. each task – requires a customized division. Otherwise, you will get damaging over- and under-responsibility – in the case of the Andreessen Horowitz dream, under-responsible humans driving over-responsible AI.

Until somebody can convince me otherwise, I will continue to characterize LLM-AI as a mode-seeking engine (reprised here in the All-Stars series). AI is not a right-tail hunter. If your task is to seek the mode (or mean or median) of a distribution, then AI will do it cheaper, faster and more accurately than any human. For example, what flavor of dough should I use for my wedding cake? AI will tell you vanilla because it is the most frequent answer. If I were the boss and had a task of that sort, I would use AI at the top rung – choose and tell me later – or if it was mission-critical, the second rung from the top – recommend and let me ratify (or not).

But if the task is to find a solution as far out on the right tail of the distribution as possible, I would never assign AI to the top rung – ever – because AI doesn’t even know how to try to find such a solution. In fact, I wouldn’t ask it to take second-rung responsibility either – I don’t want it to recommend a modal solution masquerading as a right-tail one.

I might ask it to generate options (third rung down) as food for thought for me. But more likely, I would take the lead and ask it to provide critiques of my thinking along the way. But that would take active prompting on my part, meaning Mr. Andrusko’s death-wish for the prompt box wouldn’t happen for me.
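For readers who think in code, the rule of thumb in the last few paragraphs can be sketched as a small decision function. This is only one possible reading, not a definitive implementation: the names `Rung`, `TaskKind` and `ai_rung` are hypothetical, the rung labels are paraphrased from the descriptions in this piece rather than taken from the graphic, and the numbering assumes my convention that 1 is the top rung.

```python
from enum import Enum, IntEnum


class Rung(IntEnum):
    """Six rungs, paraphrased from the text; 1 is the top (most responsibility)."""
    DECIDE_AND_INFORM = 1   # make the decision, inform the boss after the fact
    RECOMMEND = 2           # give a recommendation for the boss to ratify (or not)
    GENERATE_OPTIONS = 3    # provide options as food for thought
    ASK_FOR_STRUCTURE = 4   # ask for help structuring the task, then take it on
    ASK_FOR_HELP = 5        # ask for help, promising to learn for next time
    HELPLESS = 6            # bottom rung, never a good choice


class TaskKind(Enum):
    MODAL = "modal"            # seek the most frequent or typical answer
    RIGHT_TAIL = "right_tail"  # seek a unique solution far out on the right tail


def ai_rung(kind: TaskKind, mission_critical: bool = False) -> Rung:
    """Return the highest rung to hand to AI for a task (illustrative assumption)."""
    if kind is TaskKind.MODAL:
        # Modal tasks: top rung, or second rung if the task is mission-critical.
        return Rung.RECOMMEND if mission_critical else Rung.DECIDE_AND_INFORM
    # Right-tail tasks: never the top two rungs; options at most.
    return Rung.GENERATE_OPTIONS
```

For instance, `ai_rung(TaskKind.MODAL, mission_critical=True)` yields `Rung.RECOMMEND`. The sketch caps right-tail tasks at generating options; it does not model the likelier case where the human leads and prompts AI only for critiques along the way.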

That having been said, even though I don’t entirely concur with the Andreessen Horowitz podcast, I am delighted that The Responsibility Ladder is getting out there more broadly and that it has utility in thinking about AI.

Practitioner Insights

The assignment and sharing of responsibility in business organizations (and arguably the world in general, but I will stick with business for purposes of this piece) is sloppily done. People seem most comfortable with the simplistically polar view that the two choices are I am in charge & you are not, or you are in charge & I am not. Responsibility sharing works much better if there is a more subtle view – which is why I created The Responsibility Ladder with its six nuanced rungs of responsibility.

If you adopt the perspective of my fabulous student Daniel Debow – that of a young person starting out in a company – recognize that on any task, there are multiple levels of responsibility you can take. Don’t operate at Level 6 because that is complete failure and Level 5 is always available instead. Always practice moving up the ladder, starting with easier tasks first and moving on to ever-harder ones. Getting to Level 1 carefully is better than attempting to do so recklessly.

Always communicate with your boss. You and your boss will work more productively together if you both agree on the sharing of responsibility. For example, don’t decide to act at Level 1 when your boss is expecting and really wants Level 2 or 3.

And bosses, you should use the Ladder proactively with your subordinates. It is easier for you to start this kind of conversation than to force your subordinate to initiate.

Finally, think of sharing responsibility with AI using The Responsibility Ladder. Where the task is to find a modal answer, give AI responsibility high on the Ladder. Where the task is to find a unique and/or right-tail solution, keep AI much lower on the Ladder and keep much more of the responsibility to yourself, or share it with another human!

The modal/right-tail distinction is sharp. Most of the AI debate is about capability. This reframes it as fit. That's a meaningfully different question, and a more useful one.

The framework leaves open one gap: it resolves who takes responsibility, not what happens after.

Even for tasks where the leader must stay high on the Ladder, there are two distinct challenges. The first is deciding. The second is ensuring that decision travels intact to the people who must act on it.

You flag the problem without quite naming it: don't operate at Level 1 when your boss expects Level 2. But that gap, between what the responsible person decides and what the organisation receives and acts on, runs in both directions and at every level of the hierarchy.

The Ladder gives a vocabulary for assigning ownership. It doesn't give a mechanism for ensuring what's owned gets transmitted with sufficient precision.

A leader can still fail, not because they made the wrong decision, but because the people downstream received something different from what was intended. The Ladder solves the governance question. It assumes the communication question is settled elsewhere.

In most organisations, it isn't.

The harder question, and the one the AI debate tends to skip: not just who decides, but how does what was decided land?

Curious if you've come across Jurgen Appelo's Delegation Poker, where he turned a similar model into an explicit tool for forming working agreements. Over the years, I've found it a great way of strengthening relationships both vertically in the hierarchy and horizontally with peers: https://management30.com/practice/delegation-poker/